
Thomas Vitale ☀️ (@thomasvitale) · 10/2022 · Posts: 134 · Followers: 439

Thu 25.07.2024 07:12

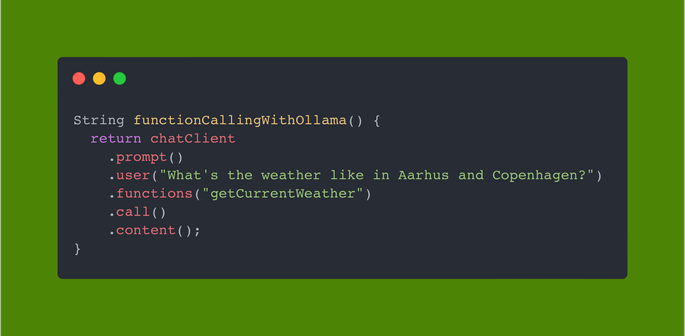

Ollama has recently added support for tools/function calling, and you can already use the new feature with Spring AI in your Java applications 🤩 I can run all my LLM apps on local, open-source, and private models like Mistral now! Full example here: https://github.com/ThomasVitale/llm-apps-java-spring-ai/tree/main/07-function-calling/function-calling-ollama

Spring AI provides a dedicated integration with the Ollama APIs (https://docs.spring.io/spring-ai/reference/api/chat/ollama-chat.html). Ollama also exposes an OpenAI-compatible API, which you can use via the Spring AI OpenAI module.
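To make the OpenAI-compatible route a bit more concrete, here is a minimal sketch of what a raw request to it could look like, without Spring AI in the picture. It assumes a default local Ollama install listening on `http://localhost:11434` and a pulled `mistral` model; the class and method names are made up for illustration, and the request is only built, not sent.

```java
import java.net.URI;
import java.net.http.HttpRequest;

public class OllamaOpenAiCompatSketch {

    // Assumption: default address of a local Ollama install; adjust as needed.
    public static final String BASE_URL = "http://localhost:11434";

    // Minimal escaping for the sketch only; real code should use a JSON library.
    public static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"");
    }

    // Builds an OpenAI-style chat completion payload with a single user message.
    public static String chatPayload(String model, String userMessage) {
        return "{\"model\": \"" + escape(model) + "\", "
                + "\"messages\": [{\"role\": \"user\", \"content\": \""
                + escape(userMessage) + "\"}]}";
    }

    // Builds (but does not send) a POST to the OpenAI-compatible chat endpoint.
    public static HttpRequest chatRequest(String model, String userMessage) {
        return HttpRequest.newBuilder()
                .uri(URI.create(BASE_URL + "/v1/chat/completions"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(chatPayload(model, userMessage)))
                .build();
    }

    public static void main(String[] args) {
        HttpRequest request = chatRequest("mistral", "Hello");
        System.out.println(request.method() + " " + request.uri());
        System.out.println(chatPayload("mistral", "Hello"));
    }
}
```

Because the payload has the OpenAI shape, a client written for OpenAI can in principle be pointed at the local base URL instead, which is what using the Spring AI OpenAI module against Ollama relies on.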

    String functionCallingWithOllama() {
        return chatClient
                .prompt()
                .user("What's the weather like in Aarhus and Copenhagen?")
                .functions("getCurrentWeather")
                .call()
                .content();
    }
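The snippet above refers to `getCurrentWeather` by name only. As a rough sketch of what could sit behind that name: in Spring AI, callable functions are commonly plain `java.util.function.Function` beans carrying an `@Description`. The version below leaves Spring out so it runs on its own; the record shapes, the class name, and the hard-coded sample temperatures are all assumptions for illustration, not taken from the linked example.

```java
import java.util.Map;
import java.util.function.Function;

public class WeatherFunctionSketch {

    // Hypothetical request/response shapes; the post does not show them.
    public record WeatherRequest(String city) {}
    public record WeatherResponse(String city, double temperatureCelsius) {}

    // Made-up sample readings standing in for a real weather service.
    private static final Map<String, Double> SAMPLE_TEMPERATURES =
            Map.of("Aarhus", 17.0, "Copenhagen", 19.0);

    // In a Spring AI app this would typically be a @Bean named
    // "getCurrentWeather" with an @Description telling the model what it does.
    public static Function<WeatherRequest, WeatherResponse> getCurrentWeather() {
        return request -> new WeatherResponse(
                request.city(),
                // Unknown cities yield NaN rather than an invented value.
                SAMPLE_TEMPERATURES.getOrDefault(request.city(), Double.NaN));
    }

    public static void main(String[] args) {
        Function<WeatherRequest, WeatherResponse> weather = getCurrentWeather();
        System.out.println(weather.apply(new WeatherRequest("Aarhus")));
        System.out.println(weather.apply(new WeatherRequest("Copenhagen")));
    }
}
```

For a prompt that mentions two cities, the model would be expected to ask for the function once per city and then combine the results into its answer.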

[Public] Replies: 0 · Boosts: 0 · Favorites: 0 · via Web